I want to say something that most people in our industry know but rarely say out loud in a client meeting: the energy model we produce at planning stage is probably wrong. Not slightly wrong. Meaningfully, significantly wrong — and we all know it and keep producing them anyway, because the regulation asks for a number, not an accurate number.

That’s an uncomfortable truth to sit with. But I’d rather we talk about it honestly, because the alternative — continuing to treat planning-stage models as if they predict future performance — is quietly undermining the credibility of sustainable design and eroding client trust every time a building’s actual bills arrive.

How Wrong Are We Talking?

Studies have found an average performance gap of around 34% across 62 UK buildings, while actual electricity use in schools and offices has been measured at 60–70% higher than predicted. Depending on building type, modelling assumptions, and climate context, reported gaps range from 20% up to an extreme of 550%.

Let that sink in. Not 5% off. Not a rounding error on a meter read. A third to two-thirds more energy consumed than the model said would be consumed, and in some cases more than five times as much.
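These percentages compare measured consumption with the design prediction. A minimal sketch of that arithmetic follows. It assumes the common convention gap = (measured − predicted) / predicted. Individual studies may define the gap differently, so check the convention before comparing figures across sources.

```python
def performance_gap(predicted_kwh: float, measured_kwh: float) -> float:
    """Return the gap as a fraction of the prediction (0.34 means 34% over)."""
    if predicted_kwh <= 0:
        raise ValueError("predicted energy must be positive")
    return (measured_kwh - predicted_kwh) / predicted_kwh


# A 34% gap: the model predicted 100 MWh and the meters recorded 134 MWh.
print(f"{performance_gap(100_000, 134_000):.0%}")  # 34%
# Under this convention a 550% gap means measured use is 6.5 times the prediction.
print(f"{performance_gap(100_000, 650_000):.0%}")  # 550%
```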

Real projects bear this out. A corporate headquarters delivered in Amsterdam to BREEAM Excellent standard was subsequently measured at more than 50% above its design energy target, with extended operating hours, plug load underestimation, and control sequence drift all contributing. A LEED Gold office campus in Chicago, valued at over $120 million, was modelled in EnergyPlus using standard occupancy profiles and achieved its certification, only for the tenant’s actual 16-hour operating day to push real consumption to nearly double the model’s prediction. These aren’t failures of bad firms doing bad work. They’re the predictable consequence of a system that rewards design-stage prediction over actual measured performance.

Buildings with simple heating and ventilation systems and clear operational routines perform closest to expectations, while those with complex HVAC systems and continuous use show the largest gaps — driven by extended operating hours, control system inefficiencies, and fragmented contractor coordination.

Why This Keeps Happening

The gap exists because early-stage models use assumed occupancy patterns, generic equipment loads, and idealized control strategies that bear no resemblance to how buildings actually operate. A model assuming 8-hour occupancy in an office that runs 18 hours will always underpredict consumption dramatically. The fundamental problem is the misconception that a simulation model of reality can predict the future — and the difficulty of modelling users is perhaps the most intractable element of that challenge.
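To see why the occupancy assumption dominates, here is a deliberately simplified sketch. It splits demand into a constant base load that runs all year plus a load that runs only while the building is in use. The kW values and the 250 working days are hypothetical placeholders, not benchmarks.

```python
BASE_LOAD_KW = 40.0       # hypothetical always-on load (servers, standby, lifts)
OCCUPIED_LOAD_KW = 160.0  # hypothetical extra load while the building is in use
WORKING_DAYS = 250        # hypothetical working days per year


def annual_kwh(hours_per_day: float) -> float:
    """Base load runs all 8760 hours; occupied load runs only during operating hours."""
    base = BASE_LOAD_KW * 8760
    occupied = OCCUPIED_LOAD_KW * hours_per_day * WORKING_DAYS
    return base + occupied


modelled = annual_kwh(8)  # the schedule assumed in the model
actual = annual_kwh(18)   # how the office actually runs
print(f"modelled {modelled:,.0f} kWh, actual {actual:,.0f} kWh, "
      f"gap {(actual - modelled) / modelled:.0%}")
# modelled 670,400 kWh, actual 1,070,400 kWh, gap 60%
```

Nothing about the fabric or plant changes between the two runs. With these placeholder numbers, the longer operating day alone lifts annual consumption by about 60%.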

The root causes span all three stages of the building lifecycle: design issues including inappropriate assumptions and uncertainty not considered; construction issues including poor management and lack of communication; and operational issues including fragmented accountability and control sequences that were never properly commissioned.

The firms producing more accurate predictions are doing two things differently: calibrating models against metered data from comparable completed buildings rather than CIBSE defaults, and running probabilistic sensitivity analysis across occupancy and equipment load ranges instead of single-point estimates.
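Probabilistic sensitivity analysis can be illustrated with a small Monte Carlo sketch. It samples operating hours and equipment power density from ranges rather than fixing them, then reports percentiles. The ranges, load densities, and one-line load model below are hypothetical assumptions for illustration, not calibrated values.

```python
import random
import statistics

HVAC_LIGHTING_W_PER_M2 = 15.0  # hypothetical occupied-hours HVAC + lighting load density
WORKING_DAYS = 250             # hypothetical working days per year


def annual_eui(hours_per_day: float, equipment_w_per_m2: float) -> float:
    """Very simplified occupied-hours energy use intensity, kWh/m2/yr."""
    load_w_per_m2 = HVAC_LIGHTING_W_PER_M2 + equipment_w_per_m2
    return load_w_per_m2 * hours_per_day * WORKING_DAYS / 1000


def simulate(n: int = 10_000, seed: int = 42) -> list[float]:
    """Sample uncertain inputs from ranges instead of fixing single values."""
    rng = random.Random(seed)  # fixed seed so the run is reproducible
    samples = []
    for _ in range(n):
        hours = rng.triangular(8, 16, 10)  # low, high, most likely operating hours
        equipment = rng.uniform(8, 20)     # plug-load density range, W/m2
        samples.append(annual_eui(hours, equipment))
    return samples


deciles = statistics.quantiles(simulate(), n=10)  # 9 cut points: P10 ... P90
print(f"single point (10 h/day, 12 W/m2): {annual_eui(10, 12):.0f} kWh/m2/yr")
print(f"P10 {deciles[0]:.0f} | P50 {deciles[4]:.0f} | P90 {deciles[8]:.0f} kWh/m2/yr")
```

Presenting the P10 to P90 band next to the single-point figure makes the uncertainty visible to the client. A real study would sample more inputs and drive a full simulation engine rather than this one-line load model.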

But is that calibration data actually accessible? The answer is: more than most people realize — if you know where to look. The UK government’s Energy Performance of Buildings Register holds energy use, floorspace, emissions and efficiency ratings for over 40,000 public buildings in England and Wales visited by the public, all freely downloadable. Carbon Buzz, a free online tool created as a collaboration between CIBSE and RIBA, enables users to record, share and compare the energy use of their building portfolios — and to track operational energy use against design assumptions, with building information shareable by name and practice or anonymously if considered sensitive. The Built Environment Carbon Database (BECD), launched by BCIS, is a free-to-access online data repository designed for the industry to share carbon data and learn from each other — structured into databases for both asset-level and product-level emissions.

The honest caveat: these databases are strongest for public sector buildings. Private sector operational data remains much harder to access — which is precisely why practices that build their own internal post-occupancy libraries have a genuine competitive advantage in producing calibrated models.
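Once you hold metered data for comparable buildings, whether downloaded from a public register or built up in an internal post-occupancy library, a basic reality check is to see where a design-stage EUI falls within that peer distribution. The peer values below are hypothetical placeholders, not figures from any of the databases named above.

```python
from bisect import bisect_left

# Hypothetical metered EUIs (kWh/m2/yr) for completed offices of the same typology.
PEER_METERED_EUI = [95, 110, 118, 126, 131, 140, 152, 165, 178, 210]


def share_of_peers_below(value: float, peers: list[float]) -> float:
    """Fraction of metered peers whose EUI is strictly lower than `value`."""
    ordered = sorted(peers)
    return bisect_left(ordered, value) / len(ordered)


design_eui = 85.0
share = share_of_peers_below(design_eui, PEER_METERED_EUI)
print(f"{share:.0%} of comparable metered buildings use less than the design predicts")
```

A prediction that undercuts every comparable building that has actually been measured is not proof of error. It is, however, a prompt to revisit the occupancy and equipment assumptions before the number goes to a client.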

So What Do We Actually Do About It?

This is where I want to shift the conversation — because complaining about the problem without proposing solutions is just venting. And the good news is that the tools and methodologies to genuinely close this gap now exist. They just haven’t been standardized across practice the way they should be.

Here’s a process worth standardizing across the AEC industry:

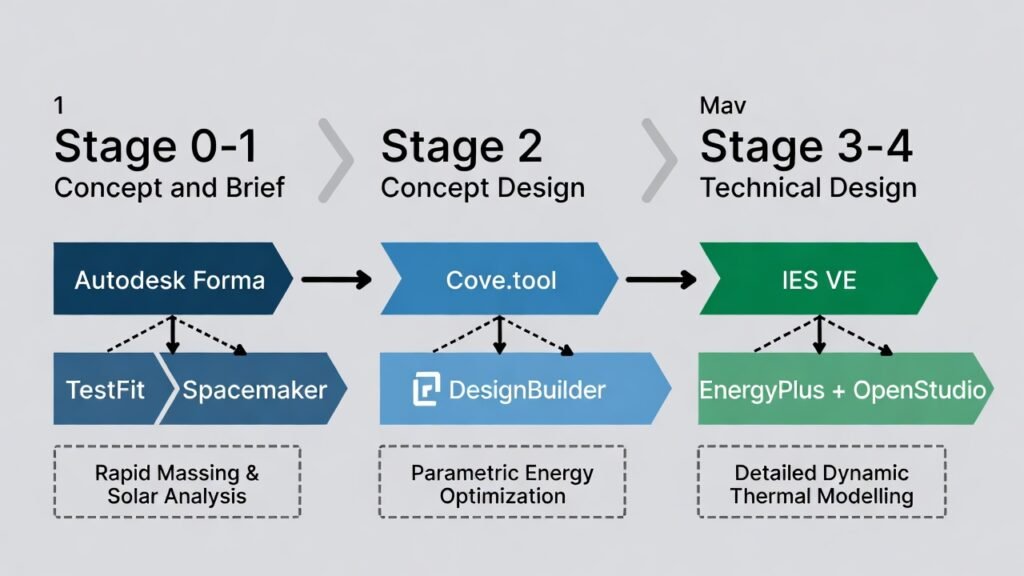

Stage 0–1 (Concept and Brief): This is where rapid massing, solar analysis, and orientation studies should happen, before a single detailed model exists. Two tools stand out at this stage. Autodesk Forma uses a web-app interface to give designers real-time environmental feedback on massing decisions, with bidirectional integration with Revit so data carries forward rather than being re-entered. Forma builds on Spacemaker, which pioneered the AI-driven microclimate analysis approach and has since been integrated into it. TestFit offers AI-driven generative design for rapid site feasibility, and is particularly strong on residential and mixed-use massing. At this stage the goal isn’t a precise number. It’s understanding the design’s sensitivity to key variables: orientation, glazing ratio, and shading strategy.
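A one-at-a-time (tornado) sweep is a simple way to rank which early massing variables matter most. The `surrogate_eui` function below is a hypothetical stand-in for whatever analysis engine you use. Its coefficients are invented purely so the sketch runs, and they carry no physical authority.

```python
import math

BASELINE = {"orientation_deg": 0.0, "glazing_ratio": 0.4, "shading_depth_m": 0.5}
RANGES = {
    "orientation_deg": (0.0, 90.0),  # main facade rotated from south to east/west
    "glazing_ratio": (0.25, 0.6),
    "shading_depth_m": (0.0, 1.0),
}


def surrogate_eui(orientation_deg: float, glazing_ratio: float,
                  shading_depth_m: float) -> float:
    """Hypothetical stand-in for a real energy model (kWh/m2/yr); coefficients invented."""
    orientation_penalty = 12 * math.sin(math.radians(orientation_deg))
    glazing_load = 90 * glazing_ratio * (1 - 0.4 * min(shading_depth_m, 1.0))
    return 70 + orientation_penalty + glazing_load


def tornado(model, baseline: dict, ranges: dict) -> list[tuple[str, float]]:
    """Vary one input at a time between its bounds; return swings, largest first."""
    swings = []
    for name, (low, high) in ranges.items():
        results = [model(**{**baseline, name: value}) for value in (low, high)]
        swings.append((name, max(results) - min(results)))
    return sorted(swings, key=lambda item: item[1], reverse=True)


for name, swing in tornado(surrogate_eui, BASELINE, RANGES):
    print(f"{name:16s} swing {swing:5.1f} kWh/m2/yr")
```

The output is a ranking, not a prediction. Knowing that glazing ratio outweighs orientation for this (hypothetical) massing tells the team where to spend design effort.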

Stage 2 (Concept Design): Introduce Cove.tool for parametric energy optimization. It ingests BIM model data, climate data, and envelope options, then runs through thousands of scenarios in minutes rather than days — with firms reporting reductions in predicted energy use of up to 20% after a single round of AI-guided iterations. A strong alternative is DesignBuilder, which offers a more engineering-focused parametric approach with deep EnergyPlus integration. Critically at this stage: calibrate your inputs against metered data from comparable completed buildings of the same typology — not CIBSE defaults. CIBSE TM54 provides a step-by-step methodology for evaluating operational energy at every stage of the design and construction process, updating through concept design, detailed design, construction, commissioning, handover and into occupation — with the design-stage model intended as a living document rather than a one-time compliance submission.
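The sketch below does not use Cove.tool or any other product’s API. It illustrates only the general enumerate, evaluate, rank pattern behind parametric optimisation. The option sets are hypothetical, and `estimate_eui` is a placeholder for a real simulation call with invented coefficients.

```python
from itertools import product

# Hypothetical option sets; a real study would pull these from the BIM model.
WALL_U = [0.15, 0.20, 0.26]      # W/m2K
GLAZING_RATIO = [0.3, 0.4, 0.5]
GLASS_G_VALUE = [0.3, 0.4, 0.55]
SHADING = [False, True]


def estimate_eui(wall_u, glazing_ratio, g_value, shading) -> float:
    """Hypothetical placeholder for a simulation run (kWh/m2/yr); coefficients invented."""
    heating = 120 * wall_u + 40 * glazing_ratio
    solar_cooling = 80 * glazing_ratio * g_value * (0.6 if shading else 1.0)
    return 55 + heating + solar_cooling


# Evaluate every combination, then rank from lowest to highest EUI.
scenarios = [
    (estimate_eui(*combo), combo)
    for combo in product(WALL_U, GLAZING_RATIO, GLASS_G_VALUE, SHADING)
]
scenarios.sort(key=lambda item: item[0])

print(f"evaluated {len(scenarios)} scenarios")
for eui, (u, wwr, g, shade) in scenarios[:3]:
    print(f"{eui:6.1f} kWh/m2/yr  U={u} WWR={wwr} g={g} shading={shade}")
```

Full enumeration is fine for a few dozen combinations. The number of scenarios multiplies with every option added, so larger design spaces usually call for sampling or optimisation methods instead.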

Stage 3–4 (Technical Design): Move to IES VE for detailed dynamic thermal modelling; it remains the industry standard for high-fidelity simulation. A key strength of TM54-aligned modelling is its comprehensive scope. It covers regulated energy loads such as space heating, cooling, fans, pumps, and lighting. It also covers the loads that are normally unregulated, including office equipment, IT servers, lifts, and catering. For mixed-use projects, it clearly separates base building consumption from tenant-specific consumption. EnergyPlus with OpenStudio is the strong open-source alternative. It is particularly relevant for firms working across UK and US markets, as it underpins both ASHRAE compliance workflows and LEED energy modelling. Run sensitivity scenarios at this stage: what happens if occupancy extends by four hours? What if equipment loads are 30% higher than assumed? Document the range, not just the headline number, and share it with the client.
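The sensitivity questions above can be laid out as a scenario table. This minimal sketch makes three assumptions: a hypothetical TM54-style end-use split, a 10-hour design day, and a crude rule that hours-sensitive end uses scale linearly with operating hours. A real study would rerun the IES VE or EnergyPlus model for each scenario instead.

```python
# Hypothetical design-case end uses: name -> (TM54-style category, kWh/yr)
DESIGN_CASE_KWH = {
    "heating": ("regulated", 90_000),
    "cooling": ("regulated", 60_000),
    "fans_pumps": ("regulated", 45_000),
    "lighting": ("regulated", 50_000),
    "small_power": ("unregulated", 110_000),
    "servers": ("unregulated", 40_000),
    "lifts": ("unregulated", 15_000),
    "catering": ("unregulated", 20_000),
}
# Crude assumption: these end uses scale linearly with operating hours...
HOURS_SENSITIVE = {"heating", "cooling", "fans_pumps", "lighting", "small_power"}
# ...and these scale with the equipment-load uplift.
EQUIPMENT = {"small_power", "servers"}


def scenario_totals(extra_hours=0.0, equipment_uplift=0.0, base_hours=10.0):
    """Return (regulated, unregulated) kWh/yr for one scenario."""
    hours_factor = (base_hours + extra_hours) / base_hours
    totals = {"regulated": 0.0, "unregulated": 0.0}
    for use, (category, kwh) in DESIGN_CASE_KWH.items():
        factor = hours_factor if use in HOURS_SENSITIVE else 1.0
        if use in EQUIPMENT:
            factor *= 1 + equipment_uplift
        totals[category] += kwh * factor
    return totals["regulated"], totals["unregulated"]


SCENARIOS = {
    "design case": {},
    "+4 h operation": {"extra_hours": 4},
    "+30% equipment": {"equipment_uplift": 0.30},
    "both": {"extra_hours": 4, "equipment_uplift": 0.30},
}

for label, kwargs in SCENARIOS.items():
    reg, unreg = scenario_totals(**kwargs)
    print(f"{label:15s} regulated {reg:9,.0f}  unregulated {unreg:9,.0f}  "
          f"total {reg + unreg:9,.0f} kWh/yr")
```

With these placeholder numbers, the combined scenario comes out about 47% above the design case. A range split into regulated and unregulated loads is the kind of output worth putting in front of the client.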

Construction and Commissioning: Use platforms like QFlow or Fieldwire to track actual material deliveries and construction activities against model assumptions. Poorly commissioned HVAC controls remain one of the largest contributors to the performance gap, which makes commissioning verification at practical completion non-negotiable.
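The sketch below does not use QFlow or Fieldwire. It illustrates one commissioning check that needs nothing more than a BMS trend export: flagging plant that runs outside the design occupied schedule. The export format, schedule, and readings are all hypothetical.

```python
from datetime import datetime

# Hypothetical BMS trend export: (timestamp, AHU supply fan status, 1 = running)
TREND = [
    ("2024-03-04 06:00", 1),  # Monday, before occupancy
    ("2024-03-04 09:00", 1),  # Monday, occupied
    ("2024-03-04 19:30", 1),  # Monday evening
    ("2024-03-04 23:00", 1),  # Monday night
    ("2024-03-05 02:00", 0),  # Tuesday, plant correctly off
    ("2024-03-09 12:00", 1),  # Saturday
]

OCCUPIED_START_HOUR = 7  # hypothetical design schedule: 07:00 to 19:00
OCCUPIED_END_HOUR = 19
OCCUPIED_WEEKDAYS = {0, 1, 2, 3, 4}  # Monday=0 ... Friday=4, as in datetime.weekday()


def out_of_hours_running(samples):
    """Return timestamps where plant was running outside the design schedule."""
    flagged = []
    for stamp, running in samples:
        t = datetime.strptime(stamp, "%Y-%m-%d %H:%M")
        scheduled = (t.weekday() in OCCUPIED_WEEKDAYS
                     and OCCUPIED_START_HOUR <= t.hour < OCCUPIED_END_HOUR)
        if running and not scheduled:
            flagged.append(t)
    return flagged


for t in out_of_hours_running(TREND):
    print(f"AHU running out of hours: {t:%a %d %b %H:%M}")
```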

Post-Occupancy (Years 1–3), and the case for Digital Twins: This is the step that almost never happens systematically, and it’s the one that would most rapidly improve the industry’s collective accuracy. Mandate metered data collection from day one of occupation, and submit results to the RIBA 2030 Climate Challenge or the Carbon Buzz benchmarking database.
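Once metered data arrives, the usual way to judge whether a model is "calibrated" is to use a pair of statistics from ASHRAE Guideline 14. The first is normalised mean bias error (NMBE). The second is the coefficient of variation of the root-mean-square error, CV(RMSE). For monthly data, the commonly cited limits are ±5% NMBE and 15% CV(RMSE). The guideline divides by n − p, where p relates to the number of model parameters. This sketch exposes p but defaults it to zero, so check the current edition for the value it expects. The monthly figures are hypothetical.

```python
import math

# Hypothetical monthly electricity (MWh), Jan to Dec: metered vs. design model.
METERED = [120, 110, 100, 90, 80, 75, 78, 80, 85, 95, 110, 125]
MODELLED = [m * 0.9 for m in METERED]  # a model that underpredicts by 10% every month


def nmbe(measured, simulated, p=0):
    """Normalised mean bias error, %. Positive means the model underpredicts."""
    n = len(measured)
    mean_measured = sum(measured) / n
    bias = sum(m - s for m, s in zip(measured, simulated))
    return 100 * bias / ((n - p) * mean_measured)


def cv_rmse(measured, simulated, p=0):
    """Coefficient of variation of the root-mean-square error, %."""
    n = len(measured)
    mean_measured = sum(measured) / n
    squared = sum((m - s) ** 2 for m, s in zip(measured, simulated))
    return 100 * math.sqrt(squared / (n - p)) / mean_measured


def meets_monthly_criteria(measured, simulated):
    """Commonly cited Guideline 14 monthly limits: |NMBE| <= 5%, CV(RMSE) <= 15%."""
    return abs(nmbe(measured, simulated)) <= 5 and cv_rmse(measured, simulated) <= 15


print(f"NMBE {nmbe(METERED, MODELLED):.1f}%  CV(RMSE) {cv_rmse(METERED, MODELLED):.1f}%")
print("calibrated:", meets_monthly_criteria(METERED, MODELLED))
```

In this example, CV(RMSE) passes at roughly 10%. The consistent 10% underprediction still fails the NMBE test, because NMBE is designed to catch systematic bias of the kind a performance gap produces.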

But the most transformative shift at this stage is the adoption of building Digital Twins — and the case for them has never been stronger. A Digital Twin creates a live, continuously updated virtual counterpart of the physical building, integrating BIM geometry, IoT sensor data, BMS feeds, smart meter readings, and weather data into a single environment. Rather than a static handover model that goes stale the moment occupation begins, a Digital Twin gives facility managers and energy engineers a single place to query actual performance, spot deviations from design intent, and test operational changes virtually before implementing them in the physical building. Digital twin frameworks enable automatic adjustments based on occupancy patterns, weather forecasts, and energy pricing signals, achieving energy efficiency improvements of 20–30% in many commercial buildings. A pilot study using IES’s Digital Twin technology demonstrated HVAC energy savings of up to 50% post-normalization by optimizing operation based on actual occupancy patterns rather than scheduled assumptions.

For the performance gap specifically, the Digital Twin closes the loop that current practice leaves open: design assumptions are tested against real data in near real-time, deviations are flagged automatically, and the calibrated model becomes the basis for the next design project rather than sitting in an archive. Unlike static asset ratings, operational Digital Twin assessments reflect actual energy use patterns influenced by occupant behavior, seasonal variations, and equipment performance — capturing the dynamic realities that planning-stage models simply cannot.
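At its simplest, "deviations are flagged automatically" means comparing each new meter reading with what the calibrated model expected for the same period. An alert is raised when the difference persists. This minimal sketch assumes daily readings and a hypothetical 15% tolerance. It also assumes three consecutive breaches are needed before a flag, so that one-off events do not trigger alarms. A production Digital Twin would use its own platform’s rules.

```python
def flag_deviations(expected, actual, tolerance=0.15, persistence=3):
    """Return day indices where |actual/expected - 1| has exceeded `tolerance`
    for at least `persistence` consecutive days."""
    flagged = []
    streak = 0
    for day, (exp, act) in enumerate(zip(expected, actual)):
        deviation = (act - exp) / exp
        streak = streak + 1 if abs(deviation) > tolerance else 0
        if streak >= persistence:
            flagged.append(day)
    return flagged


# Hypothetical daily kWh: calibrated-model expectation vs. smart-meter reading
expected = [500, 500, 480, 510, 505, 300, 290, 500, 500, 500]
actual = [520, 490, 470, 600, 610, 380, 360, 590, 505, 500]
print(flag_deviations(expected, actual))  # [5, 6, 7]
```

The persistence rule trades speed for fewer false alarms. Setting `persistence=1` flags every breach on the day it happens.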

Resolving the performance gap also requires soft methods alongside technical ones. These include effective communication among building stakeholders and integrated project delivery models in which designers, contractors, and operators share accountability for actual performance, not just design-stage predictions.

The Standards Need to Catch Up

Here’s the harder conversation: the regulatory framework is structurally incentivizing inaccuracy. When compliance is demonstrated by a model rather than measured performance, the incentive to produce an optimistic model is baked in. SAP, SBEM, and the US LEED energy prerequisite all accept design-stage predictions as proof of compliance. NABERS in Australia and New Zealand is the notable exception — it rates buildings on actual measured energy consumption — and the performance outcomes in those markets are measurably better as a result.

The UK’s Soft Landings policy, now embedded in the Government Construction Strategy, moves in the right direction by requiring post-occupancy evaluation on public sector projects. The CIBSE/Construction Innovation Hub Operational Energy and Carbon (OpEC) spreadsheet is freely available to all engineers and architects. It tracks the impact of design changes on energy intensity from design through to early occupation, bridging the gap until Building Regulations catch up with net zero aspirations. But neither initiative has the regulatory teeth to transform standard private sector practice.

What we actually need is a mandatory post-occupancy data submission requirement for buildings claiming net zero or high-performance certification — similar to how NABERS operates, embedded within the planning and Building Regulations framework. The technology to do this exists. Smart meters, BMS data exports, Digital Twins, and automated benchmarking platforms can make data collection close to frictionless. What’s missing is the regulatory mandate that makes actual performance — not predicted performance — the standard of proof.

Until that exists, the workaround is professional discipline: use the better tools, calibrate against real data, run sensitivity ranges rather than single-point estimates, and be honest with clients about the difference between a compliance model and a performance prediction.

The Approach the Industry Should Standardize

The single most important conversation in any project happens before the model is even opened. Sitting down with the owner at RIBA Stage 0 — genuinely sitting down, not sending a document for signature — and walking through what the energy targets mean in operational terms, what assumptions underpin them, and what the client’s actual occupancy patterns will look like, changes the entire trajectory of everything that follows.

This should be standard industry practice. Not the approach of a few progressive firms, but the baseline expectation from first appointment. How many hours will the building actually operate? Who manages the controls, and how? Are there server rooms, catering facilities, extended-hours trading floors? These aren’t awkward questions — they’re the questions that determine whether the model will be meaningful or decorative.
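One way to make the briefing answers stick is to capture them as structured data that feeds the model directly. An unanswered question then stays visible instead of being silently replaced by a default. The field names below are hypothetical and do not follow any particular template.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class OperationalBrief:
    """Hypothetical record of the operational questions asked at Stage 0."""
    weekday_hours: Optional[float] = None    # hours/day the building is actually in use
    weekend_hours: Optional[float] = None
    server_room_kw: Optional[float] = None   # continuous IT load
    catering_on_site: Optional[bool] = None
    controls_owner: Optional[str] = None     # who manages the BMS day to day

    def unanswered(self) -> list[str]:
        """Names of questions that have not been answered yet (None, not falsy)."""
        return [f.name for f in fields(self) if getattr(self, f.name) is None]

    def annual_operating_hours(self) -> float:
        if self.weekday_hours is None or self.weekend_hours is None:
            raise ValueError("operating hours not yet briefed")
        return 52 * (5 * self.weekday_hours + 2 * self.weekend_hours)


brief = OperationalBrief(weekday_hours=14, weekend_hours=6, catering_on_site=True)
print("Still to ask:", brief.unanswered())  # ['server_room_kw', 'controls_owner']
print("Annual operating hours:", brief.annual_operating_hours())  # 4264
```

The key design choice is using `None` rather than a default value. A blank stays blank until someone asks the client, instead of quietly becoming an 8-hour day.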

Projects where the brief said “net zero” and the client’s fit-out specification included 24-hour trading floors, server rooms, and catering facilities running at full capacity — none of which appeared in the planning model — aren’t rare. That’s not the client’s fault. It’s a briefing failure, and it’s the industry’s collective responsibility to ask the questions that surface those operational realities before the model locks them out.

The standards and frameworks documented in the Exchange Information Requirements (EIR) and BIM Execution Plan (BEP) aren’t bureaucratic formality; they’re the design criteria every subsequent decision is measured against. Getting them right at the start is an investment that pays back many times over in avoided rework, honest client relationships, and buildings that actually perform.

The Bottom Line

We have better tools than ever to close the gap between predicted and actual energy performance. The methodology exists. The software exists. Digital Twins are making continuous performance monitoring genuinely practical. What’s needed now is the professional will to standardize this approach across practice — and the regulatory evolution to make measured performance, not modelled performance, the definition of compliance.

The buildings we design and build today will be operating for fifty, sixty, a hundred years. The gap between what we model and what we deliver isn’t just a technical footnote — it’s a direct contribution to the emissions overshoot that is driving climate change. We owe the people who occupy our buildings honest predictions. We owe the planet accurate performance.

That’s reason enough to fix this.